What is Feature Engineering?

Feature engineering is the process of using domain knowledge to create relevant features so that machine learning algorithms become more accurate. It has a noticeable impact on model performance: when feature engineering is done well, the model performs better.

What is a Time Series?

A time series is a sequence of data points that occur in successive order over some period of time. It tracks the movement of the chosen data points over a specified period, with the data points recorded at regular intervals.

In a time series, the data is captured at equal intervals, and each successive data point in the series typically depends on its past values.

What are the different types of features in a time series?

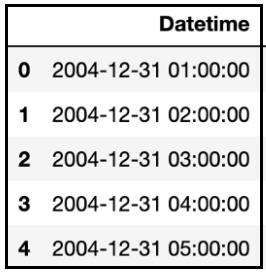

I. Date-time Based Features:

A time series has a date-time field from which we can extract many factors, such as minute, hour, business hour, weekend, weekday, season, quarter, holiday, leap year, start of month, start of year, end of year and many more. These play a major role in finding the seasonality and trend of the data, and in interpreting it.
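As a minimal sketch of the extraction described above, the pandas snippet below derives a few calendar features from a `DatetimeIndex`. The timestamps, the `sales` column and the definition of business hours (09:00 to 17:00 on weekdays) are assumptions made for illustration only.

```python
import pandas as pd

# Hypothetical observations; the timestamps and the "sales" column are made up.
idx = pd.to_datetime(
    ["2024-02-29 08:00", "2024-03-01 14:30", "2024-03-02 20:00", "2024-03-03 10:15"]
)
df = pd.DataFrame({"sales": [10, 12, 9, 14]}, index=idx)

# Calendar attributes exposed directly by the DatetimeIndex.
df["hour"] = df.index.hour
df["day_of_week"] = df.index.dayofweek        # Monday=0 ... Sunday=6
df["quarter"] = df.index.quarter
df["is_month_start"] = df.index.is_month_start
df["is_leap_year"] = df.index.is_leap_year

# Derived flags. Business hours are ASSUMED to be 09:00-17:00 on weekdays.
df["is_weekend"] = df.index.dayofweek >= 5
df["is_business_hour"] = (~df["is_weekend"]) & df["hour"].between(9, 16)

print(df)
```

Holiday and season flags usually need an external calendar or a domain-specific mapping, so they are left out of this sketch.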

II. Lag Features:

Lag features are target values from previous periods. For example, if we would like to forecast the sales in period ‘t’, we can use the sales of the previous month, i.e. ‘t-1’, as a feature; this is a lag of 1. Similarly, using the sales of month ‘t-2’ as a feature gives a lag of 2, and so on. The smallest lag used limits how far ahead the model can forecast: if only lags of 4 or more are used, every feature needed for a prediction 4 steps ahead is already known, so the model can make predictions up to 4 steps ahead.
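A short sketch of lag features, assuming a monthly pandas DataFrame with a hypothetical `sales` column. `Series.shift(k)` moves each value `k` rows down, so row ‘t’ receives the value from ‘t-k’.

```python
import pandas as pd

# Hypothetical monthly sales; the values are made up for illustration.
idx = pd.date_range("2023-01-01", periods=6, freq="MS")
df = pd.DataFrame({"sales": [100, 120, 90, 130, 110, 150]}, index=idx)

# Lag k: the value observed k periods before the current row.
for lag in (1, 2):
    df[f"sales_lag_{lag}"] = df["sales"].shift(lag)

# The first rows have no history for the larger lags, so they contain NaN.
features = df.dropna()
print(features)
```

Dropping the incomplete rows is only one option; whether to drop or fill them depends on the model being used.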

The lag values chosen depend on the correlation of individual values with their past values. ACF (Autocorrelation Function) and PACF (Partial Autocorrelation Function) plots help to determine the lags at which the correlation is significant.

- ACF: The ACF plot shows the correlation between the time series and lagged versions of itself.

- PACF: The PACF plot shows the correlation between the series and a given lag after the effect of the shorter, intermediate lags has been removed.
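To make the two definitions above concrete, here is a rough NumPy sketch that computes sample ACF and PACF values by hand. The helper names `acf` and `pacf_ols` are hypothetical, and the PACF is estimated with one common approach (the last coefficient of a least-squares autoregression of order k). In practice, plotting helpers such as `plot_acf` and `plot_pacf` from `statsmodels.graphics.tsaplots` are the usual choice.

```python
import numpy as np

def acf(x, max_lag):
    """Sample autocorrelation of x at lags 0..max_lag."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    denom = np.dot(x, x)
    return np.array(
        [np.dot(x[: len(x) - k], x[k:]) / denom for k in range(max_lag + 1)]
    )

def pacf_ols(x, max_lag):
    """Partial autocorrelation at lags 1..max_lag.

    For each k, regress x[t] on x[t-1], ..., x[t-k] and keep the coefficient
    on x[t-k]: the link to lag k once shorter lags are accounted for.
    """
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    n = len(x)
    out = []
    for k in range(1, max_lag + 1):
        # Column j holds x lagged by j, aligned with the targets x[k:].
        X = np.column_stack([x[k - j : n - j] for j in range(1, k + 1)])
        coef, *_ = np.linalg.lstsq(X, x[k:], rcond=None)
        out.append(coef[-1])
    return np.array(out)

# Simulated AR(1) series with coefficient 0.7 (an assumption for the demo).
rng = np.random.default_rng(0)
noise = rng.normal(size=5000)
series = np.zeros(5000)
for t in range(1, 5000):
    series[t] = 0.7 * series[t - 1] + noise[t]

acf_vals = acf(series, 5)
pacf_vals = pacf_ols(series, 5)
print(np.round(acf_vals, 2))
print(np.round(pacf_vals, 2))
```

For a series like this one, the ACF decays gradually while the PACF is close to zero after lag 1, which suggests that a lag of 1 carries most of the usable information.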

III. Rolling Window Features:

Rolling window features calculate statistics, such as the mean, over a fixed number of past values. It is called the rolling window method because the window is different for every data point, and it looks like a window that slides forward with every next point.

Forecast Point: The point in time at which a prediction is made.

Feature Derivation Window (FDW): A rolling window of past values, defined relative to the forecast point, from which features are derived.

Forecast Window (FW): The range of future values that we wish to predict.
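A minimal sketch of rolling window features in pandas, assuming a daily `sales` column and a feature derivation window of 3 steps (both hypothetical). The series is shifted by one step first so that each window ends just before the forecast point and does not include the value being predicted.

```python
import pandas as pd

# Hypothetical daily sales; the values are made up for illustration.
idx = pd.date_range("2024-01-01", periods=6, freq="D")
df = pd.DataFrame({"sales": [10.0, 20.0, 30.0, 40.0, 50.0, 60.0]}, index=idx)

window = 3  # assumed length of the feature derivation window

# Shift by one step so the current value never leaks into its own features.
past = df["sales"].shift(1)

df["roll_mean_3"] = past.rolling(window).mean()
df["roll_max_3"] = past.rolling(window).max()

print(df)
```

The first three rows are NaN because a full window of past values is not yet available there.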

IV. Expanding Window Features:

It is a type of window that includes all previous data in the series, which helps in keeping track of the bounds of the observable data. The expanding function collects the set of all prior values for each time step; these prior values can be summarized (for example as a running mean, minimum or maximum) and included as new features.
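The same idea can be sketched with `Series.expanding()` in pandas, again on a hypothetical `sales` column. As in a rolling window, the series is shifted by one step so that each row only summarizes values strictly before it.

```python
import pandas as pd

# Hypothetical daily sales; the values are made up for illustration.
idx = pd.date_range("2024-01-01", periods=5, freq="D")
df = pd.DataFrame({"sales": [30.0, 10.0, 50.0, 20.0, 40.0]}, index=idx)

# Only values before the current row are allowed into the window.
past = df["sales"].shift(1)

# The window grows by one observation at every step.
df["exp_mean"] = past.expanding().mean()
df["exp_min"] = past.expanding().min()
df["exp_max"] = past.expanding().max()

print(df)
```

The running minimum and maximum track the bounds of the data seen so far, while the running mean gives a long-term level to compare new values against.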

V. Domain-Specific Features:

Good domain knowledge, an understanding of the problem statement and its final objective, and sufficient knowledge of the given data are enough to engineer the domain-specific features of the model.

Since feature engineering plays a vital role in the modelling process, it has to be done carefully to enhance the predictive power of the model.
